About the article

DOI: https://www.doi.org/10.15219/em111.1722

The article is in the printed version on pages 32-45.

How to cite

Sahani, C., Rawat, N., & Bhatnagar, M. (2025). Decoding digital learning: analysing antecedents of behavioural intention and e-learning adoption. e-mentor, 4(111), 32-45. https://www.doi.org/10.15219/em111.1722

E-mentor number 4 (111) / 2025

Table of contents

- Introduction

- Hypothesis Generation based on Literature Review and Theoretical Background

- Development of Conceptual Model

- Research Methodology

- Data Analysis

- Managerial Implications

- Conclusion and Future Scope

- References

Decoding Digital Learning: Analysing Antecedents of Behavioural Intention and E-Learning Adoption

Chahat Sahani, Navneet Rawat, Mukul Bhatnagar

Abstract

This study seeks to identify the determinants of behavioural intention (BI) and to examine how BI influences e-learning adoption (ELA) behaviour among students. The article investigates the impact of five key factors – Accessibility, Government Policy, Organisational Support, Instructor Attitude, and Technostress – on intention to use and adopt e-learning platforms. A validated questionnaire was administered to a heterogeneous sample of 290 participants, and SmartPLS 4.0 was used for the analysis. The findings indicate that Technostress, Government Policy, and Organisational Support have a substantial and positive influence on intention and acceptance of the virtual learning platform, with Technostress emerging as the most influential factor. By contrast, Accessibility and Instructor Attitude are not significant, suggesting that access to digital infrastructure and e-learning platforms is generally good, and that students consistently report positive instructor attitudes; as a result, there is insufficient variance for these variables to meaningfully differentiate outcomes in the statistical model. The results show a strong effect of BI on e-learning adoption, with a large effect size. In addition, the model explains 53% of the variance in behavioural intention and 49% of the variance in e-learning adoption, indicating strong explanatory power. Notably, Technostress has the greatest impact, and its effects can be reduced through immersive and engaging sessions. This suggests that Technostress may sometimes function as a ‘challenge stressor’ rather than a ‘hindrance stressor’, encouraging active engagement rather than avoidance. These issues should be integrated into strategic decision-making to enhance digital education outcomes through a positive, engaging virtual learning system.

Keywords: e-learning, Learning Management System, PLS-SEM, antecedents, enablers, barriers, online education

Introduction

Online education combines classroom teaching with information and communication technologies (ICTs) and is widely regarded as an effective means of sharing knowledge (Baber, 2021). It is also referred to as virtual, distance, electronic or mobile learning, and has developed alongside the expansion of information technology (Singh & Thurman, 2019). The term is often used interchangeably with virtual education and e-learning platforms. Its widespread adoption has improved communication and teaching for both businesses and individuals, indicating considerable potential for training and education. The growing tendency for learners to choose online courses is a notable achievement of online education (Alkhalaf et al., 2012). This can help students manage their time and balance study with work, and it is now considered an integral part of the education sector. Many higher education institutions have adopted learning management systems (LMSs) to create, manage and deliver educational materials, monitor student progress, and administer assignments and quizzes, thereby facilitating interaction between learners and educators (Kim et al., 2021). Previous studies have also indicated that the implementation of LMSs has substantially transformed universities and enhanced their educational offerings (Dhapte, 2025; Singh, 2020).

Many researchers (Ahmad et al., 2018; Anwar et al., 2020; Hassanzadeh et al., 2012) have examined the features associated with successful e-learning in order to maximise the benefits and quality of virtual education systems. These features are considered from both human and non-human perspectives, combining technological aspects such as LMSs with their users, namely educators and students.

Anwar et al. (2020), Lan et al. (2021), and Zhao and Xue (2023) identify key motivators and challenges associated with e-learning, or education delivered fully online. Enablers of e-learning include quality parameters, learners’ experience and achievement level, attitude and competence level, perceived usefulness, and intention to accept virtual education. Conversely, several variables have been investigated as potential barriers to the wider acceptance of virtual learning, including technostress, the digital divide, accessibility issues, resistance to change, limited digital literacy, and financial concerns (Kong et al., 2014).

The instructor, course design, student characteristics, university support, and information technology are five key dimensions of online education. Rodríguez-Ardura and Meseguer-Artola (2016) confirmed that instructors’ efforts are critical for the effective application of information systems and the success of online learning. Hou et al. (2022) and Teo et al. (2008) proposed instructor characteristics that influence effectiveness, such as expertise, commitment, status, priorities, fundamental values, training, IT competence, mindset, and teaching style. Furthermore, El Alfy et al. (2017) note that students’ characteristics – including expectations, engagement, media competence, personal competence (self-regulated learning), motivation, course compatibility, flexibility, and satisfaction – also contribute to e-learning adoption. Another pillar is the institution’s characteristics, including organisational leadership, readiness, institutional policy, staff training, organisational culture, technical support facilities, and change management systems. Information technology attributes encompass information quality, ease of use, system quality, accessibility, security, privacy, and service quality (Teo et al., 2020). Lastly, course design includes course structure and content, information quality, subject area, knowledge tests, competency tests, feedback, quizzes, and assessment modes and levels of difficulty (Karimian & Chahartangi, 2024; Kong et al., 2014; Nguyen et al., 2024). Considering all of the above variables, the current study sets the following research objectives:

RO1 – To study the impact of accessibility on learners’ behavioural intention to use e-learning platforms.

RO2 – To test the effect of organisational support on learners’ behavioural intention to embrace e-learning.

RO3 – To determine the influence of government policy on learners’ behavioural intention to use e-learning.

RO4 – To assess the role of instructor attitude in shaping learners’ behavioural intention.

RO5 – To examine how Technostress affects learners’ behavioural intention to use e-learning.

RO6 – To identify the influence of behavioural intention on actual adoption of e-learning platforms.

RO7 – To generate and test a conceptual framework that incorporates institutional, psychological, and contextual antecedents to explain e-learning adoption.

Hypothesis Generation based on Literature Review and Theoretical Background

Adoption of online education in higher education institutions (HEIs) is rooted in several complementary theoretical foundations. Perceptions of practicality, effectiveness, ease, and convenience in virtual education are commonly examined through established frameworks. Davis’s (1989) Technology Acceptance Model (TAM) focuses on perceived usefulness and perceived ease of use as predictors of adoption. By contrast, the Unified Theory of Acceptance and Use of Technology (UTAUT) incorporates facilitating conditions and social influence (Venkatesh et al., 2003). Accessibility aligns with perceived ease of use (TAM) and facilitating conditions (UTAUT). Where platforms are accessible (low bandwidth requirements, mobile-friendly design, intuitive interfaces, interactive features), the likelihood of adoption is higher.

Similarly, instructor attitude can be mapped to the social influence construct in UTAUT and to the educational and emotional support elements of Social Support Theory (House, 1981). Instructors’ support (encouragement, feedback, and guidance) has been reported to positively affect students’ satisfaction and their willingness to continue using e-learning tools. This support may be direct (through teaching behaviour) or indirect (by creating a positive learning context).

Government support enhances enabling conditions through infrastructure, subsidies, or requirements that minimise barriers to e-learning. This increased support is expected to improve users’ behavioural intention to use the e-learning platform (Kaur & Sehajpal, 2025; Sehajpal et al., 2025; Singh & Sehajpal, 2025). UTAUT supports the proposed hypothesis that government policy relates to intention through constructs such as Social Influence (norms, perceived pressure) and Facilitating Conditions (availability of resources), which are conducive to positive behavioural intention and use.

Institutional Theory also explains how government policy can act as a coercive pressure and as normative organisational support in shaping intention to use e-learning (DiMaggio & Powell, 1983). Institutional Theory focuses on how external forces (e.g., government regulations, policies, and mandates that incentivise particular behaviours to secure legitimacy and compliance) influence organisations and individuals.

Technostress, on the other hand, captures negative pressures and is commonly treated as a deterrent to adoption behaviour. Technostress is grounded in the Stress–Strain–Outcome framework (Koeske & Koeske, 1993), which conceptualises technology overload, complexity, and continual updates as stressors that lead to strain (fatigue, frustration, dissatisfaction), which may reduce learners’ intention to adopt or use e-learning.

The discussion above provides a theoretical rationale for examining variables such as Accessibility, Government Policy, Organisational Support, Instructor Attitude, Technostress, and E-learning Adoption within a single framework. Together, these elements form a multi-level ecosystem that influences technology adoption in learning environments. Based on TAM, UTAUT, Institutional Theory, Social Support Theory, and the Stress–Strain–Outcome framework, technology adoption is shaped not only by individual user perceptions (e.g., instructor attitude and technostress) but also by structural enablers (e.g., accessibility and government policy) and organisational conditions (e.g., institutional support). Such models suggest that psychological, environmental, and institutional factors jointly determine behavioural intention and actual usage. The literature relevant to the constructs used in the current study is reviewed below:

Accessibility and Psychological Framework to Accept Virtual Platforms for Education

With a range of technologies and devices used to access learning resources – mobile phones, laptops, tablets, and desktop computers – e-learning has experienced rapid growth. Making e-learning accessible also depends on integrating technologies such as speech-to-text, alternative text for images, captions, screen readers, and keyboard navigation. Technology has fundamentally transformed education, teaching methods, and learning environments (Kithsiri et al., 2018). Traditionally, educational resources were not easily accessible to many people, and limited collaboration and information exchange were also observed among students seated in the same classroom (Rodríguez-Ardura & Meseguer-Artola, 2016). Many learning tools are now available in multiple formats – text, audio, video with subtitles, and transcripts – allowing students to select what best fits their skills and preferences and making learning more inclusive (Wen et al., 2008). Studies have found that accessibility and overall usability depend critically on logical structure, clear instructions, and straightforward navigation (Lyukevich et al., 2020). Accessible e-learning systems stimulate greater engagement and participation among all students, including those from underprivileged or underrepresented groups (Gibreel & Abdalla, 2024). System accessibility influences perceptions of intuitiveness and manageability of virtual platforms. Users are likely to view such platforms as useful and requiring less effort when they are easy to access, navigate, and use. Thus, we hypothesise:

H1: Accessibility has a significant impact on the likelihood of adoption of virtual platforms.

Organisational Support and Willingness for Embracing Virtual Platform

Learners’ intention to implement and use online education platforms in a dynamic environment is strongly influenced by organisational support (Alajmi et al., 2018). Dedicated leadership, learning opportunities, sufficient funding, and a supportive culture all contribute to this support. Leadership, resources, technical assistance, and training are directly instrumental in achieving positive outcomes. In addition, reducing barriers, addressing resistance, and shaping attitudes can also improve acceptance. Successful and comprehensive endorsement of online education across institutions depends on strong, visible, and consistent organisational support (Alkhalaf et al., 2012; Rowell, 2010). By removing barriers and enabling students and staff to engage, supportive environments foster a culture that values technology. Thus, we hypothesise:

H2: Organisational Support has a positive relationship with propensity for adoption of virtual platforms.

Government Policy and Willingness for Adopting Virtual Platform

The extensive implementation of the National Education Policy (NEP), the establishment of virtual laboratories, financial assistance policies for acquiring digital devices, and unified platforms such as Diksha and Swayam have supported wider acceptance of digital learning and, in turn, contributed to changes in social norms. This social influence can shape behavioural intention through learners’ perceptions of support and approval from society and peers (Elameer, 2021). Integrating digital learning into the curriculum fosters awareness and a preference for interacting with digital tools. Enabling conditions, including guidelines that ensure digital literacy training, adequate resources, and technical assistance, are known to influence behavioural intention (Kanwal & Rehman, 2014). Consequently, learners who perceive themselves as equipped and supported in using virtual educational tools may be more motivated. Thus, we hypothesise:

H3: Government Policy has a significant relationship with behavioural intention to use the e-learning platform.

Instructor Attitude and Readiness to Embrace Virtual Platform

Instructor Attitude is widely regarded as an important variable in determining intention to use online and digital learning mechanisms (El Alfy et al., 2017; Yi et al., 2024). A positive attitude towards e-learning is likely to increase an instructor’s willingness to adopt and use these platforms in their teaching. Willingness to engage may be shaped more by attitude than by convenience; among experienced instructors, perceived usefulness, satisfaction, and positive affect indicate a favourable attitude, which in turn predicts stronger intention to use e-learning. Institutional and social factors also influence educators’ attitudes. Accordingly, it is hypothesised that:

H4: Instructor Attitude is significantly related to behavioural intentions to use the online learning platform.

Technostress and Willingness for Embracing Virtual Platform

Technostress refers to stress or anxiety associated with the use of digital technologies and can undermine willingness to use digital educational platforms (Tarafdar et al., 2011). Several forms of technostress have been identified in the literature, including techno-invasion (disturbances to personal life), techno-overload (being forced to work faster), and techno-uncertainty (feeling left behind by rapid technological change). Several studies show that greater technostress is associated with lower intentions to use, or continue using, e-learning platforms (Penado Abilleira et al., 2020). Students who experience technostress may feel overwhelmed, anxious, and dissatisfied, which reduces their motivation to engage with online learning resources (Chu & Chen, 2016). Reduced participation, lower memory retention, and anxiety resulting from technostress can influence academic achievement and satisfaction with online education (Lal et al., 2024). Students experiencing technostress may also be less likely to participate in group projects or communicate with instructors or peers. Based on these findings, we hypothesise:

H5: Technostress has a significant relationship with behavioural intention to use e-learning platforms.

Behavioural Intention to Use E-learning Platforms and Adoption Behaviour

Behavioural intention is widely acknowledged as a crucial determinant of adoption behaviour, thereby enhancing the effectiveness of virtual learning methods (Al-Hunaiyyan et al., 2021). Numerous studies and theoretical models (including TAM and UTAUT) consistently indicate a strong relationship between the propensity to adopt and the actual adoption of online education platforms (Jameel et al., 2022; Xian, 2019). Behavioural intention reflects learners’ readiness or willingness to use virtual learning platforms in the knowledge acquisition process and is frequently identified as the main antecedent of actual adoption. Strong intentions are likely to translate into actual use behaviour. Thus, we hypothesise:

H6: Behavioural intention has a significant relationship with actual usage of e-learning platforms.

Only a limited number of studies have investigated these constructs within a single empirical framework, particularly in developing countries where infrastructure and pedagogical practices may differ. The current literature seldom addresses how these variables collectively influence students’ perceptions. These gaps underscore the need for an integrated analysis that evaluates the relative contribution of technostress, accessibility, government policy, organisational support, and instructor attitude to students’ e-learning experience. The present study seeks to address this gap by developing a conceptual model and providing empirical evidence, thereby extending understanding of factors that shape effective and sustainable online learning.

Development of Conceptual Model

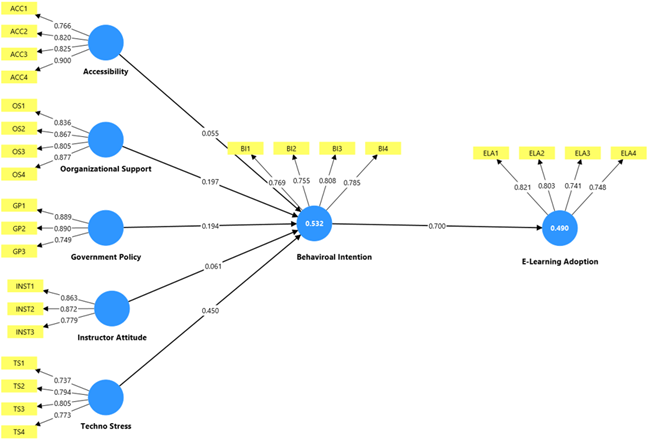

A conceptual model of e-learning adoption has been developed using the variables shown in Figure 1.

Figure 1. Conceptual Model

Source: authors’ own work.

Research Methodology

Quantitative methods were used to test the hypotheses in the conceptual framework. SmartPLS 4 (Ringle et al., 2024) was used to assess the measurement and structural models. Data collected through surveys of users of an e-learning educational platform were analysed using PLS-based Structural Equation Modelling (PLS-SEM). This is a robust quantitative technique for evaluating complex relationships among latent constructs, particularly in exploratory work and in social science research involving non-normal data. The analysis includes two stages: first, the measurement model is assessed (i.e., the relationships between indicators and constructs); second, the structural model is assessed (i.e., the hypothesised causal relationships between constructs). Following data screening for outliers, multicollinearity, and average variance extracted (AVE) (Coluci et al., 2015), the approach prioritises maximising explained variance in dependent variables rather than overall model fit indices. The methodology was selected to ensure a robust basis for generating insights into the facilitators and barriers associated with e-learning platforms. Measurement items were drawn from an extensive review of the literature (Hwang & Kim, 2022; Karimian & Chahartangi, 2024; Rahayu et al., 2021). The questionnaire (Table 1) was validated by industry experts and professionals before responses were collected. Overall, this approach provides a credible basis for generating results that extend the existing evidence base.

Table 1. Survey Questionnaire

| Construct | Code | Measurement Item | Reference |
|---|---|---|---|
| Accessibility | ACC1 | I own a suitable device for e-learning | Ssemugenyi & Nuru Seje, 2021 |
| | ACC2 | I have reliable internet access for e-learning | Alyoussef, 2022 |
| | ACC3 | These platforms are easy to use | |
| | ACC4 | LMSs are compatible with mobile devices | |
| Organisational Support | OS1 | My institute provides sufficient IT infrastructure for e-learning | Tom et al., 2019 |
| | OS2 | University leadership allocates sufficient funds for e-learning tools | Herath et al., 2015 |
| | OS3 | Technical support for e-learning is readily available | |
| | OS4 | My institute regularly updates digital learning technologies | |
| | OS5 | Administrative processes support e-learning | |
| Government Policy | GP1 | The government provides grants for e-learning infrastructure | Singh et al., 2021 |
| | GP2 | National policies mandate the integration of digital education | Elameer, 2021 |
| | GP3 | Government agencies monitor e-learning quality standards | |
| | GP4 | Government initiatives promote faculty training, development, and accessibility | |
| Instructor Attitude | INST1 | My instructor is confident in delivering content through an online platform | Ssemugenyi & Nuru Seje, 2021 |
| | INST2 | My instructor enjoys using the e-learning system for teaching | Hernández-Ramos et al., 2014 |
| | INST3 | My instructor promotes active student participation | Sangwan et al., 2021 |
| Technostress | TS1 | Technology compels me to work faster | Tarafdar et al., 2011 |
| | TS2 | I am worried about technology interfering with my personal time | Penado Abilleira et al., 2020 |
| | TS3 | Sometimes it is hard to learn how to use new educational technologies | |
| | TS4 | Technology fails me when I need it most | |
| Behavioural Intention | BI1 | I will use online education tools frequently in the future | Yang & Qian, 2025 |
| | BI2 | I believe that e-learning enhances students’ engagement | Ameen et al., 2019 |
| | BI3 | I am comfortable adopting new e-learning technologies | Jamil, 2017 |
| | BI4 | I intend to recommend adoption of the online education platform to my peers | |
| E-learning Adoption | ELA1 | I find online education tools useful for improving my learning performance | Al-Maroof et al., 2021 |
| | ELA2 | Understanding virtual learning tools is easy for me | Shah et al., 2025 |
| | ELA3 | I possess the necessary resources for the learning management system | Mashroofa et al., 2023 |
| | ELA4 | My peer group influences me to use the virtual learning system | |

Source: authors’ own work.

Data Analysis

A dataset was compiled from responses provided by 290 participants via a structured questionnaire designed to capture a heterogeneous audience, thereby supporting scalability and efficiency, as shown in Table 2. Convenience sampling was used, and respondents were higher education students in the State of Uttaranchal. The sample was drawn from 33 universities, comprising 11 state universities, one central university, and 18 private universities. Participation was voluntary; informed consent was obtained online and completed before data collection began, and no personally identifiable information was collected. Given the limitations of convenience sampling – including possible sample bias and variation in responses – preventive measures were implemented to mitigate these issues.

Table 2. Demographic Data

| User’s Age | Frequency | Percentage | Educational Qualification | Frequency | Percentage |
|---|---|---|---|---|---|
| Less than 25 | 77 | 26.5 | Undergraduate | 69 | 23.8 |
| Between 25 and 35 | 174 | 60 | Postgraduate | 128 | 44.1 |
| More than 35 | 39 | 13.5 | Ph.D./Others | 93 | 32.1 |
| **Gender** | **Frequency** | **Percentage** | **Experience** | **Frequency** | **Percentage** |
| Male | 102 | 35.2 | Positive | 167 | 57.5 |
| Female | 188 | 64.8 | Negative | 123 | 42.4 |

Source: authors’ own work.

Measurement Model Assessment

Following Taber (2018), assessment of the outer model examines construct reliability and internal consistency through indicator loadings (λ), composite reliability (CR), and Cronbach’s alpha (α), with values above 0.7 generally considered acceptable; CR is often regarded as the more appropriate reliability metric. Convergent validity is assessed using the average variance extracted (AVE), which should exceed 0.5, as shown in Table 3. Campbell and Fiske (1959) suggested assessing discriminant validity to confirm that constructs are empirically distinct, using approaches such as the heterotrait–monotrait (HTMT) ratio, cross-loadings, and the Fornell–Larcker criterion. For HTMT, recommended upper thresholds typically lie between 0.85 and 0.90; cross-loadings and the Fornell–Larcker criterion are reported in Tables 4 and 5. The measurement model meets the reliability and validity criteria reported in Table 3.

Table 3. Factor Loading, Reliability, Validity and Collinearity of Outer Model

| Item | Loading | Alpha | CR | AVE | VIF |
|---|---|---|---|---|---|
| ACC1 | 0.766 | 0.85 | 0.898 | 0.687 | 1.746 |
| ACC2 | 0.82 | | | | 2.132 |
| ACC3 | 0.825 | | | | 1.935 |
| ACC4 | 0.9 | | | | 2.378 |
| BI1 | 0.769 | 0.785 | 0.861 | 0.608 | 1.57 |
| BI2 | 0.755 | | | | 1.476 |
| BI3 | 0.808 | | | | 1.635 |
| BI4 | 0.785 | | | | 1.574 |
| ELA1 | 0.821 | 0.784 | 0.86 | 0.607 | 1.655 |
| ELA2 | 0.803 | | | | 1.65 |
| ELA3 | 0.741 | | | | 1.502 |
| ELA4 | 0.748 | | | | 1.503 |
| GP1 | 0.889 | 0.81 | 0.882 | 0.715 | 1.729 |
| GP2 | 0.89 | | | | 2.099 |
| GP3 | 0.749 | | | | 1.685 |
| INST1 | 0.863 | 0.79 | 0.877 | 0.704 | 1.795 |
| INST2 | 0.872 | | | | 1.826 |
| OS1 | 0.836 | 0.868 | 0.91 | 0.716 | 1.961 |
| OS2 | 0.867 | | | | 2.463 |
| OS3 | 0.805 | | | | 1.811 |
| OS4 | 0.877 | | | | 2.35 |
| TS1 | 0.737 | 0.782 | 0.859 | 0.605 | 1.376 |
| TS2 | 0.794 | | | | 1.814 |
| TS3 | 0.805 | | | | 1.597 |
| TS4 | 0.773 | | | | 1.633 |

Source: authors’ own work from PLS-SEM software.

The reliability measures (CR, loading values, and Cronbach’s alpha) all exceed 0.70, indicating that items such as ACC1, ACC2, ACC3, and ACC4 collectively represent the Accessibility latent construct effectively; the same criteria are met by indicators for the other constructs, establishing internal consistency. AVE reflects the proportion of variance captured by the construct relative to measurement error and provides evidence of convergent validity. Table 3 shows that the AVE for every construct exceeds 0.50, ranging from 0.605 (TS) to 0.716 (OS), meeting the validity threshold.
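As an illustrative cross-check (not part of the authors’ SmartPLS output), AVE and composite reliability can be recomputed from the loadings in Table 3. The sketch below assumes that the loadings are standardised and that the reported CR is the usual rho_c composite reliability; the function and variable names are hypothetical.

```python
def average_variance_extracted(loadings):
    """AVE: mean of the squared standardised indicator loadings."""
    return sum(l ** 2 for l in loadings) / len(loadings)


def composite_reliability(loadings):
    """Composite reliability (rho_c), assuming standardised loadings.

    Each indicator's error variance is taken as 1 - loading**2.
    """
    total = sum(loadings)
    error = sum(1 - l ** 2 for l in loadings)
    return total ** 2 / (total ** 2 + error)


# Loadings copied from Table 3 for three of the seven constructs.
TABLE3_LOADINGS = {
    "ACC": [0.766, 0.820, 0.825, 0.900],
    "BI": [0.769, 0.755, 0.808, 0.785],
    "TS": [0.737, 0.794, 0.805, 0.773],
}

for name, lam in TABLE3_LOADINGS.items():
    ave = average_variance_extracted(lam)
    cr = composite_reliability(lam)
    print(f"{name}: AVE = {ave:.3f}, CR = {cr:.3f}")
```

Under these assumptions the recomputed values for ACC, BI, and TS agree with the AVE and CR columns of Table 3 to three decimal places.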

Table 4. Discriminant Validity: Using Cross Loadings

| | ACC | BI | EL | GP | INST | OS | TS |
|---|---|---|---|---|---|---|---|
| ACC1 | 0.766 | 0.252 | 0.169 | 0.628 | -0.189 | 0.428 | 0.138 |
| ACC2 | 0.820 | 0.208 | 0.269 | 0.401 | -0.236 | 0.383 | 0.089 |
| ACC3 | 0.825 | 0.320 | 0.328 | 0.403 | -0.097 | 0.459 | 0.243 |
| ACC4 | 0.900 | 0.377 | 0.363 | 0.475 | -0.164 | 0.508 | 0.239 |
| BI1 | 0.282 | 0.769 | 0.479 | 0.367 | 0.079 | 0.472 | 0.515 |
| BI2 | 0.314 | 0.755 | 0.523 | 0.396 | 0.167 | 0.481 | 0.466 |
| BI3 | 0.354 | 0.808 | 0.613 | 0.387 | 0.109 | 0.510 | 0.509 |
| BI4 | 0.179 | 0.785 | 0.561 | 0.321 | 0.195 | 0.409 | 0.534 |
| ELA1 | 0.362 | 0.632 | 0.821 | 0.333 | 0.167 | 0.485 | 0.551 |
| ELA2 | 0.207 | 0.563 | 0.803 | 0.237 | 0.129 | 0.426 | 0.508 |
| ELA3 | 0.274 | 0.468 | 0.741 | 0.263 | 0.105 | 0.314 | 0.399 |
| ELA4 | 0.245 | 0.497 | 0.748 | 0.271 | 0.197 | 0.372 | 0.423 |
| GP1 | 0.531 | 0.484 | 0.334 | 0.889 | -0.064 | 0.578 | 0.279 |
| GP2 | 0.455 | 0.415 | 0.348 | 0.890 | 0.004 | 0.531 | 0.329 |
| GP3 | 0.468 | 0.221 | 0.173 | 0.749 | -0.140 | 0.371 | 0.049 |
| INST1 | -0.107 | 0.155 | 0.135 | -0.005 | 0.863 | 0.024 | 0.238 |
| INST2 | -0.210 | 0.160 | 0.183 | -0.092 | 0.872 | 0.039 | 0.297 |
| INST3 | -0.181 | 0.125 | 0.170 | -0.061 | 0.779 | -0.007 | 0.208 |
| OS1 | 0.550 | 0.524 | 0.421 | 0.572 | -0.001 | 0.836 | 0.475 |
| OS2 | 0.397 | 0.461 | 0.401 | 0.465 | 0.049 | 0.867 | 0.510 |
| OS3 | 0.445 | 0.467 | 0.391 | 0.537 | 0.006 | 0.805 | 0.406 |
| OS4 | 0.444 | 0.567 | 0.531 | 0.471 | 0.030 | 0.877 | 0.521 |
| TS1 | 0.392 | 0.516 | 0.535 | 0.350 | 0.163 | 0.469 | 0.737 |
| TS2 | 0.056 | 0.432 | 0.399 | 0.098 | 0.309 | 0.420 | 0.794 |
| TS3 | 0.187 | 0.550 | 0.491 | 0.280 | 0.157 | 0.493 | 0.805 |
| TS4 | 0.051 | 0.503 | 0.457 | 0.155 | 0.316 | 0.371 | 0.773 |

Source: authors’ own work.

Table 5. Discriminant Validity: Using Fornell–Larcker Criterion

| | ACC | BI | EL | GP | INST | OS | TS |
|---|---|---|---|---|---|---|---|
| ACC | **0.829** | | | | | | |
| BI | 0.363 | **0.780** | | | | | |
| EL | 0.352 | 0.700 | **0.779** | | | | |
| GP | 0.569 | 0.472 | 0.356 | **0.845** | | | |
| INST | 0.196 | 0.176 | 0.193 | 0.062 | **0.839** | | |
| OS | 0.544 | 0.600 | 0.520 | 0.603 | 0.024 | **0.846** | |
| TS | 0.228 | 0.649 | 0.610 | 0.292 | 0.297 | 0.567 | **0.778** |

Source: extracted from PLS-SEM Software.

The bold diagonal entries report the square roots of the average variance extracted (AVE) for each construct, while the remaining values represent inter-construct correlations. Discriminant validity refers to the extent to which the items associated with a given construct measure that construct rather than other constructs, thereby indicating clear separation between constructs. Discriminant validity was assessed using cross-loadings (Table 4) and the Fornell–Larcker criterion (Table 5). In Table 5, the diagonal values (√AVE) exceed the corresponding inter-construct correlations, indicating that TS, GP, OS, INST and ACC are empirically distinct. Accordingly, each construct is measured using a distinct set of indicators.
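The Fornell–Larcker comparison described above can be expressed as a small check on the values in Table 5. This is a minimal sketch rather than the authors’ procedure: the lower-triangular matrix is copied from Table 5, and the helper names are hypothetical.

```python
CONSTRUCTS = ["ACC", "BI", "EL", "GP", "INST", "OS", "TS"]

# Lower triangle of Table 5: the last entry of each row is sqrt(AVE),
# the preceding entries are inter-construct correlations.
TABLE5 = [
    [0.829],
    [0.363, 0.780],
    [0.352, 0.700, 0.779],
    [0.569, 0.472, 0.356, 0.845],
    [0.196, 0.176, 0.193, 0.062, 0.839],
    [0.544, 0.600, 0.520, 0.603, 0.024, 0.846],
    [0.228, 0.649, 0.610, 0.292, 0.297, 0.567, 0.778],
]


def fornell_larcker_margins(names, tri):
    """Return (margin, construct_a, construct_b) for every construct pair.

    The margin is the smaller of the two sqrt(AVE) values minus the
    absolute correlation; a non-positive margin violates the criterion.
    """
    margins = []
    for i, row in enumerate(tri):
        for j, corr in enumerate(row[:-1]):
            limit = min(tri[i][i], tri[j][j])
            margins.append((limit - abs(corr), names[i], names[j]))
    return margins


margins = fornell_larcker_margins(CONSTRUCTS, TABLE5)
violations = [m for m in margins if m[0] <= 0]
closest = min(margins)

print("violations:", violations)
print("closest pair:", closest[1], closest[2], "margin", round(closest[0], 3))
```

With the Table 5 values no pair violates the criterion; the pair with the smallest margin is BI and EL, which is unsurprising given the strong BI–ELA path in the structural model.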

Structural Model Assessment

Structural model assessment is a vital step in testing hypotheses about latent constructs (Bagozzi & Yi, 1988). Structural relationships are evaluated by analysing path coefficients, p-values, and t-values, together with f² (effect size) and R², after establishing reliability and validity of the outer model. The current model assesses the relationships between ACC and BI, OS and BI, TS and BI, INST and BI, GP and BI, and BI and ELA.

Table 6. Hypothesis Testing

| Hypothesis | Relationship | β | SD | t-value | p-value | Decision |
|---|---|---|---|---|---|---|
| H1 | ACC → BI | 0.055 | 0.060 | 0.922 | 0.357 | Rejected |
| H2 | OS → BI | 0.197 | 0.073 | 2.686 | 0.007 | Supported |
| H3 | GP → BI | 0.194 | 0.062 | 3.142 | 0.002 | Supported |
| H4 | INST → BI | 0.061 | 0.046 | 1.330 | 0.184 | Rejected |
| H5 | TS → BI | 0.450 | 0.054 | 8.268 | < 0.001 | Supported |
| H6 | BI → ELA | 0.700 | 0.035 | 20.118 | < 0.001 | Supported |

Source: authors’ own work from PLS-SEM software.
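The relationship between the columns of Table 6 can be illustrated with a short calculation. The sketch below assumes that each t-value is approximately the path coefficient divided by its bootstrap standard deviation and that two-tailed p-values follow a large-sample normal approximation; this is an assumption for illustration, not a description of how the software computes them, and the function names are hypothetical.

```python
import math


def t_ratio(beta, sd):
    """Approximate t-value as coefficient divided by its standard deviation."""
    return beta / sd


def two_tailed_p(t):
    """Two-tailed p-value under a standard normal approximation."""
    return math.erfc(abs(t) / math.sqrt(2))


# (hypothesis, beta, SD, reported t) copied from Table 6.
TABLE6 = [
    ("H1", 0.055, 0.060, 0.922),
    ("H2", 0.197, 0.073, 2.686),
    ("H3", 0.194, 0.062, 3.142),
    ("H4", 0.061, 0.046, 1.330),
    ("H5", 0.450, 0.054, 8.268),
    ("H6", 0.700, 0.035, 20.118),
]

for name, beta, sd, t_reported in TABLE6:
    approx_t = t_ratio(beta, sd)          # differs slightly: inputs are rounded
    p = two_tailed_p(t_reported)
    print(f"{name}: beta/SD = {approx_t:.2f}, reported t = {t_reported}, p = {p:.3f}")
```

Because β and SD are rounded to three decimals, the recomputed ratios only approximate the reported t-values; the p-values derived from the reported t-values agree with Table 6 to about three decimal places under the stated approximation.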

The empirical assessment of the hypothesised structural paths in the PLS-SEM model (Table 6) indicates mixed effects of the latent constructs on behavioural intention and e-learning adoption. The path from Accessibility to Behavioural Intention (H1: β = 0.055, p = 0.357) is not statistically significant, suggesting that the mere availability of, or ease of access to, technological infrastructure does not, in itself, materially increase users’ intention to engage with online education systems. This is consistent with UTAUT (Venkatesh et al., 2003), which implies that facilitating conditions may not exert a direct effect when other antecedents are more salient. By contrast, Organisational Support has a statistically significant positive effect on Behavioural Intention (H2: β = 0.197, p = 0.007), underscoring the importance of responsive and robust support in strengthening user confidence, technological self-efficacy, and perceptions of platform reliability; this, in turn, fosters stronger behavioural intention. The literature has likewise emphasised the role of academic and technical scaffolding in virtual learning environments.

Furthermore, Government Policy shows a significant positive effect on Behavioural Intention (H3: β = 0.194, p = 0.002). This indicates that macro-level regulatory and institutional frameworks that endorse, incentivise, or mandate digital transformation act as important external motivators that legitimise e-learning and align stakeholders with national priorities, consistent with Alshammari et al. (2016) on the enabling role of policy interventions in technology assimilation. In contrast, Instructor Attitude does not show a significant direct effect (H4: β = 0.061, p = 0.184). This suggests a diminished, indirect, or mediated role of instructors in shaping behavioural intention in contexts where students are comparatively autonomous and technologically experienced, which challenges the traditionally central role emphasised by Rodríguez-Ardura and Meseguer-Artola (2016).

Technostress has a strong and highly significant positive association with Behavioural Intention (H5: β = 0.450, p < 0.001), indicating that students may experience technology-related challenges as manageable and even motivating when supported by adequate digital literacy and institutional infrastructure. This implies that technostress may sometimes function as a ‘challenge stressor’ rather than a ‘hindrance stressor’, prompting engagement rather than avoidance.

This reinforces the central premise of the TAM and its extensions (Davis et al., 1989): enhancing users’ competence and reducing technological anxiety through structured pedagogical interventions and user empowerment initiatives have a pronounced and unambiguous effect on the formation of intention. This, in turn, indicates that institutional administrators should invest strategically in sustained training provision and proactive technical assistance protocols. Finally, H6 shows a strong statistical association between Behavioural Intention and E learning Adoption (β = 0.700, p < 0.001), confirming intention as the most proximal antecedent of actual behaviour and underscoring the importance of cultivating positive attitudinal, normative and control beliefs to catalyse active engagement with e learning platforms.

Existing evidence suggests that when access to digital infrastructure and devices is highly standardised within an institution or region, it varies little and is therefore a weaker statistical predictor (Aboagye et al., 2021; Adarkwah, 2021). Similar findings have been reported in multiple pandemic-related studies: once a baseline level of access has been achieved, students tend to treat accessibility as an expected factor rather than a differentiator, and psychological variables such as technostress or self-efficacy become more important in shaping the learning experience (Hodges et al., 2020; Mohammadi, 2015). Our sample reflects the same pattern: institutional provision and the general availability of devices have resulted in most students reporting uniformly high accessibility scores, producing a limited-range effect.
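The limited-range argument can be illustrated with a small simulation. The sketch below uses purely synthetic data, not the study’s responses: it generates a predictor and an outcome with a true correlation of about 0.5, then keeps only cases with high predictor scores, mimicking a sample in which nearly everyone reports high accessibility. The slope, cut-off, sample size, and seed are arbitrary assumptions chosen for illustration.

```python
import math
import random


def pearson_r(xs, ys):
    """Plain Pearson correlation coefficient."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    sxx = sum((x - mean_x) ** 2 for x in xs)
    syy = sum((y - mean_y) ** 2 for y in ys)
    return sxy / math.sqrt(sxx * syy)


def simulate(n=20000, slope=0.5, cutoff=1.0, seed=42):
    """Compare the correlation in the full sample with that in a range-restricted one."""
    rng = random.Random(seed)
    xs = [rng.gauss(0, 1) for _ in range(n)]
    noise_sd = math.sqrt(1 - slope ** 2)  # keeps the outcome variance close to 1
    ys = [slope * x + rng.gauss(0, noise_sd) for x in xs]
    r_full = pearson_r(xs, ys)

    # Keep only high scorers on the predictor (hypothetical "uniformly high access")
    kept = [(x, y) for x, y in zip(xs, ys) if x > cutoff]
    r_restricted = pearson_r([x for x, _ in kept], [y for _, y in kept])
    return r_full, r_restricted, len(kept)


r_full, r_restricted, n_kept = simulate()
print(f"full sample r = {r_full:.3f}")
print(f"restricted sample r = {r_restricted:.3f} (n = {n_kept})")
```

Under these assumptions the correlation in the restricted sample comes out noticeably weaker than in the full sample, even though the underlying relationship is unchanged. This is the mechanism suggested above for the non-significant Accessibility path.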

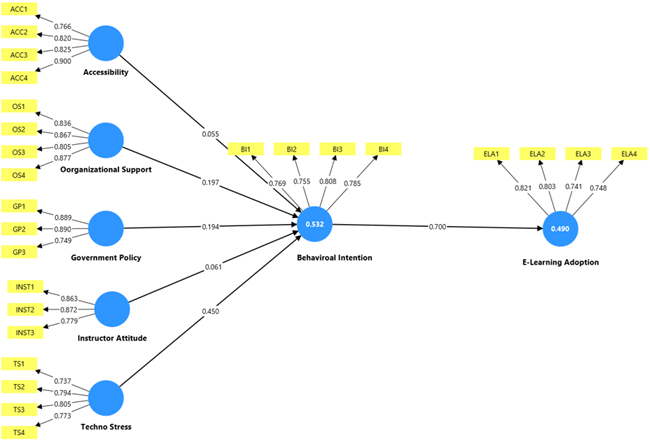

Figure 2. E Learning Adoption Model

Source: extracted from PLS-SEM software.

To assess the model’s predictive capacity, R² values were examined. The R² for Behavioural Intention is 0.530, indicating that approximately 53% of the variance is explained by accessibility, organisational support, government policy, instructor attitude, and technostress, as shown in Figure 2, reflecting moderate-to-substantial predictive power. The R² for E Learning Adoption is 0.490, indicating that behavioural intention alone explains 49% of the variance in adoption. The f² values for each predictor indicate the contribution of each factor to the explained variance (Table 7).
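Because Behavioural Intention is the only predictor of E Learning Adoption in the model, the reported figures for this path can be cross-checked against one another. Assuming standardised latent variable scores (the usual PLS-SEM setting, but an assumption here), the R² of a single-predictor equation equals the squared path coefficient, and f² reduces to R²/(1 − R²) because the R² with the predictor excluded is zero:

$$
R^2_{ELA} = \beta_{BI \to ELA}^{2} = 0.700^{2} = 0.490,
\qquad
f^2_{BI \to ELA} = \frac{R^2_{\text{included}} - R^2_{\text{excluded}}}{1 - R^2_{\text{included}}} = \frac{0.490 - 0}{1 - 0.490} \approx 0.96
$$

Both results agree with the reported path coefficient (β = 0.700) and R² (0.490), and the implied f² matches the value of 0.96 reported for this path.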

Table 7. f² Values

| Path | f² | Interpretation |
|---|---|---|
| ACC → BI | 0.004 | Very small (almost negligible) |
| BI → ELA | 0.96 | Very large effect |
| GP → BI | 0.044 | Small effect |
| INST → BI | 0.007 | Negligible |
| OS → BI | 0.035 | Small effect |
| TS → BI | 0.258 | Medium-to-large effect |

Source: authors’ own work from PLS-SEM software.
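The verbal labels in Table 7 are broadly in line with the conventional benchmarks for f², usually attributed to Cohen: roughly 0.02 for a small effect, 0.15 for a medium effect, and 0.35 for a large effect. The sketch below applies those cut-offs to the reported values. The thresholds and the function name are assumptions for illustration, since the article does not state the exact cut-offs behind its labels.

```python
# f² values as reported in Table 7
F2_VALUES = {
    "ACC -> BI": 0.004,
    "BI -> ELA": 0.96,
    "GP -> BI": 0.044,
    "INST -> BI": 0.007,
    "OS -> BI": 0.035,
    "TS -> BI": 0.258,
}


def classify_f2(f2, small=0.02, medium=0.15, large=0.35):
    """Label an f² value using conventional cut-offs (assumed, not taken from the article)."""
    if f2 < small:
        return "negligible"
    if f2 < medium:
        return "small"
    if f2 < large:
        return "medium"
    return "large"


labels = {path: classify_f2(value) for path, value in F2_VALUES.items()}
for path, label in labels.items():
    print(f"{path}: f2 = {F2_VALUES[path]} ({label})")
```

With these cut-offs, TS → BI (0.258) sits between the medium and large thresholds, which fits the "medium-to-large" wording in the table.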

Managerial Implications

The findings indicate that organisational support, government policy, and technostress are particularly important in shaping learners’ intention to use e learning, whereas accessibility and instructor attitude do not show direct effects. Institutions should therefore focus on building strong support systems. Organisational assistance (administrative support, specialist help, training, and resource provision) has clear implications for learners’ ability to use e learning effectively and to build competence. Supportive environments enable faculty and students to reduce hurdles and foster a culture that values technology (Baber, 2021). Continuous professional development and readily available support can increase users’ confidence and capability when using e learning platforms.

Policy-makers should also ensure that e learning policies are clear, stable, and backed by appropriate resources, as such policies create an enabling ecosystem that supports platform use and long-term adoption (Nagy & Duma, 2023). Since technostress emerged as a strong positive predictor, managers should adopt structured digital competency training, balanced workload practices, and stress-reduction measures so that students experience technology as manageable and beneficial rather than overwhelming (Penado Abilleira et al., 2020).

Although baseline accessibility and instructor attitude were not statistically significant, institutions must still ensure minimum standards of digital infrastructure and provide professional development for instructors, as these remain prerequisites for sustainable e learning provision (Ramhith & Lallmahomed, 2024). Finally, since behavioural intention is a key predictor of actual uptake, strengthening user motivation and the perceived value of online learning should be central to institutional strategy. The present study considers these variables to assess their influence on improving e learning adoption and supporting equitable access to education.

Conclusion and Future Scope

E learning is an important aspect of contemporary education, as it supports flexibility and accessibility and helps to overcome geographical, economic, and social boundaries. By integrating technology into teaching and learning environments, institutions can provide more inclusive and efficient educational experiences. This shift highlights the importance of identifying the main factors that determine the effective implementation of virtual learning environments, as understanding these determinants is crucial to improving student engagement and the overall e learning experience.

This article examines the antecedents of behavioural intention to use e learning platforms – Accessibility, Government Policy, Organisational Support, Instructor Attitude and Technostress – which, in turn, influence E Learning Adoption behaviour. The measurement model was assessed for reliability and validity, and the results were satisfactory: all constructs met the recommended thresholds (Cronbach’s alpha (α) > 0.7; composite reliability (CR) > 0.85; average variance extracted (AVE) > 0.6), and the VIF values for all measurement items were below 5 (Table 3), indicating no multicollinearity concerns. This suggests that the indicators represent their respective constructs well. Discriminant validity was also supported: under the Fornell–Larcker criterion, all diagonal values exceed the inter-construct correlations (Tables 4 and 5), indicating that the items for each construct relate to that construct only and that none of the constructs (TS, GP, OS, INST and ACC) overlaps with the others.

The structural model indicates that Government Policy (β = 0.194, p = 0.002), Technostress (β = 0.450, p < 0.001), and Organisational Support (β = 0.197, p = 0.007) have significant and positive effects on behavioural intention to use e learning platforms.

With respect to Hypothesis 3, the relationship between Government Policy and behavioural intention aligns with the coercive pressures described in Institutional Theory and with the DOI attributes of compatibility and observability. Clear policies, mandates, or incentives from government bodies create an environment in which e learning is perceived as legitimate, necessary, and aligned with broader educational reforms. Learners respond to this institutional climate by forming stronger intentions to adopt e learning. Government Policy therefore emerges as a structural antecedent that reinforces the institutional layer of the conceptual model.

Organisational Support is grounded in UTAUT’s facilitating conditions and Institutional Theory’s normative pressures. This result confirms that when institutions provide training, technical support, and encouragement, learners feel more confident and motivated to use e learning. Organisational support signals legitimacy and reduces perceived effort, thereby strengthening intention. The finding supports the framework’s institutional dimension by showing that organisational structures and norms meaningfully shape learner behaviour, thus addressing research objective 2.

Among these factors, Technostress has the largest effect, with a medium-to-large effect size (f² = 0.258; Table 7), indicating that higher levels of technology-related pressure are associated with stronger intentions among students to continue using e learning platforms. The results show that, although technology can create stress – such as pressure to work faster, difficulty learning new tools, interference with personal time, and system failures – Technostress still significantly increases intention to use e learning (β = 0.450, p < 0.001). In line with Stress–Strain–Outcome theory, these technology-related stressors create strain, but the outcome is not necessarily withdrawal; instead, students may feel compelled to continue using technology because it is essential for completing coursework, accessing materials, and meeting academic expectations. This can be described as necessity-driven or compliance-driven adoption. BI acts as the bridge between stress and actual behaviour. Thus, Hypothesis 5 is supported, validating the psychological dimension of the conceptual framework and highlighting Technostress as a critical factor shaping e learning adoption.

By contrast, the current study found non-significant effects for Instructor Attitude (β = 0.061, p = 0.184) and Accessibility (β = 0.055, p = 0.357), with weak effect sizes (f² = 0.007 and 0.004, respectively).

Although accessibility is often linked to perceived ease of use in TAM and facilitating conditions in UTAUT, the non-significant effect suggests that learners may no longer perceive accessibility as a barrier. In many educational contexts, baseline access to devices and internet connectivity has become normalised. Accessibility may therefore function as a hygiene factor – necessary, but not sufficient to shape intention. Once minimum access is in place, other motivational and institutional factors appear to become more decisive. This finding refines the conceptual framework by indicating that contextual enablers such as accessibility do not automatically translate into intention unless accompanied by institutional or psychological drivers. Accordingly, Hypothesis 1 is not supported.

Instructor attitude is identified as a key social influence within UTAUT, but the non-significant result suggests that learners may not rely heavily on instructor cues when forming intentions. This may occur when learners are already familiar with digital tools or where peer and institutional influences outweigh instructor attitudes. It may also indicate that instructor endorsement is not strongly communicated. This finding nuances the psychological dimension of the framework by showing that not all sources of social influence carry equal weight in shaping intention. Accordingly, Hypothesis 4 is not supported.

The non-significant results for accessibility and instructor attitude may reflect uniformly high accessibility enabled by institution-wide digital infrastructure provision, together with consistently positive instructor behaviour reported by most students. Where such variables show limited variation, their predictive relationship with learning outcomes is weakened.

Behavioural intention has a significant impact on E Learning Adoption behaviour, with a large effect size (f² = 0.96), highlighting its central role in translating the effects of exogenous constructs into actual use behaviour. The R² value for BI is 0.530, indicating that approximately 53% of the variance is explained by Accessibility, Organisational Support, Government Policy, Instructor Attitude, and Technostress (Figure 2), which represents moderate-to-strong explanatory power. The R² value for E Learning Adoption is 0.490, indicating that behavioural intention alone explains 49% of the variance in adoption. The f² values for each predictor (Table 7) indicate the contribution of each independent variable to the explained variance of the dependent variables; this level of explained variance is acceptable in behavioural research.

Consistent with TAM, UTAUT, and DOI, intention remains the most powerful proximal determinant of actual behaviour. This confirms that the antecedents examined in this study, namely organisational support, government policy, and technostress, ultimately influence adoption by affecting intention. This finding completes the conceptual framework by validating the intention–behaviour link central to technology acceptance theories.

In summary, the results highlight Technostress, Government Policy, and Organisational Support as key antecedents of behavioural intention to use an online learning platform in both initial and continued practice. These findings can guide policy-makers and higher education institutions in strengthening such capabilities to support e learning adoption as technology-mediated learning becomes increasingly essential. Such progress requires personalised and adaptive learning systems that improve educational effectiveness by tailoring content to learners’ specific needs. The integration of gamification tools and other interactive engagement methods may also improve outcomes without the environmental costs associated with physical infrastructure or printed materials.

Overall, the results show that e learning adoption is shaped by a multi-layered system of influences. First, institutional antecedents such as government policy and organisational support significantly strengthen intention, whereas instructor attitude does not exert the expected social influence. Second, psychological antecedents such as Technostress are the strongest predictors, underscoring the importance of emotional and cognitive strain in technology acceptance. Third, contextual antecedents such as accessibility do not significantly shape intention, suggesting that contextual readiness alone is insufficient. Finally, behavioural intention strongly predicts actual adoption, supporting the theoretical foundations of the model.

Future research could explore the moderating role of government policies and schemes in colleges’ and universities’ initiation and implementation of online education, as such measures can encourage HEIs to respond more rapidly and expand the reach of virtual education. During COVID-19, certain government bodies provided mobile phones and computers to eligible students through higher education institutions to facilitate access to learning platforms. Further work could also examine causal relationships between government initiatives and institutional readiness.

References

- Aboagye, E., Yawson, J. A., & Appiah, K. N. (2021). COVID-19 and E-learning: The challenges of students in tertiary institutions. Social Education Research, 2(1), 1–8. https://doi.org/10.37256/ser.122020422

- Adarkwah, M. A. (2021). “I’m not against online teaching, but what about us?”: ICT in Ghana post COVID-19. Education and Information Technologies, 26, 1665–1685. https://doi.org/10.1007/s10639-020-10331-z

- Ahmad, N., Quadri, N. N., Qureshi, M. R. N., & Alam, M. M. (2018). Relationship modeling of critical success factors for enhancing sustainability and performance in E-learning. Sustainability, 10(12), 4776. https://doi.org/10.3390/SU10124776

- Al-Hunaiyyan, A., Alhajri, R., & Bimba, A. (2021). Towards an efficient integrated distance and blended learning model: How to minimise the impact of COVID-19 on education. International Journal of Interactive Mobile Technologies, 15(10), 173–193. https://doi.org/10.3991/ijim.v15i10.21331

- Al-Maroof, R. S., Alhumaid, K., & Salloum, S. (2021). The continuous intention to use e-learning, from two different perspectives. Education Sciences, 11(1), 1–20. https://doi.org/10.3390/educsci11010006

- Alajmi, Q., Arshah, R. A., Kamaludin, A., & Al-Sharafi, M. A. (2018). Current state of cloud-based e-learning adoption: Results from Gulf Cooperation Council’s Higher education institutions. 2018 IEEE 9th Annual Information Technology, Electronics and Mobile Communication Conference (IEMCON), 569–575. Institute of Electrical and Electronics Engineers Inc. https://doi.org/10.1109/IEMCON.2018.8614772

- Alkhalaf, S., Drew, S., & Alhussain, T. (2012). Assessing the impact of e-learning systems on learners: A survey study in the KSA. Procedia - Social and Behavioral Sciences, 47, 98–104. https://doi.org/10.1016/J.SBSPRO.2012.06.620

- Alyoussef, I. Y. (2022). Acceptance of a flipped classroom to improve university students’ learning: An empirical study on the TAM model and the unified theory of acceptance and use of technology (UTAUT). Heliyon, 8(12), e12529. https://doi.org/10.1016/j.heliyon.2022.e12529

- Ameen, N., Willis, R., Abdullah, M. N., & Shah, M. (2019). Towards the successful integration of e-learning systems in higher education in Iraq: A student perspective. British Journal of Educational Technology, 50(3), 1434–1446. https://doi.org/10.1111/bjet.12651

- Anwar, S. A., Sohail, M. S., & Al Reyaysa, M. (2020). Quality assurance dimensions for e-learning institutions in Gulf countries. Quality Assurance in Education, 28(4), 205–217. https://doi.org/10.1108/QAE-02-2020-0024

- Baber, H. (2021). Modelling the acceptance of e-learning during the pandemic of COVID-19-A study of South Korea. International Journal of Management Education, 19(2), 100503. https://doi.org/10.1016/J.IJME.2021.100503

- Bagozzi, R. P., & Yi, Y. (1988). On the evaluation of structural equation models. Journal of the Academy of Marketing Science, 16(1), 74–94. https://doi.org/10.1007/BF02723327

- Campbell, D. T., & Fiske, D. W. (1959). Convergent and discriminant validation by the multitrait-multimethod matrix. Psychological Bulletin, 56(2), 81–105. https://doi.org/10.1037/H0046016

- Chu, T.-H., & Chen, Y.-Y. (2016). With Good We Become Good: Understanding e-learning adoption by theory of planned behavior and group influences. Computers and Education, 92–93, 37–52. https://doi.org/10.1016/j.compedu.2015.09.013

- Coluci, M. Z. O., Alexandre, N. M. C., & Milani, D. (2015). Construção de instrumentos de medida na área da saúde [Construction of measurement instruments in the area of health]. Ciência & Saúde Coletiva, 20(3), 925–936. https://doi.org/10.1590/1413-81232015203.04332013

- Davis, F. D. (1989). Perceived usefulness, perceived ease of use, and user acceptance of information technology. MIS Quarterly, 13(3), 319–340. https://doi.org/10.2307/249008

- DiMaggio, P. J., & Powell, W. W. (1983). The iron cage revisited: Institutional isomorphism and collective rationality in organizational fields. American Sociological Review, 48(2), 147–160. https://www.jstor.org/stable/2095101

- Dhapte, A. (2025). Academic e-learning market. Market Research Future. https://www.marketresearchfuture.com/reports/academic-e-learning-market-23650

- El Alfy, S., Gómez, J. M., & Ivanov, D. (2017). Exploring instructors’ technology readiness, attitudes and behavioral intentions towards e-learning technologies in Egypt and United Arab Emirates. Education and Information Technologies, 22(5), 2605–2627. https://doi.org/10.1007/S10639-016-9562-1

- Elameer, A. S. (2021). COVID-19 and real e-government and e-learning adoption in Iraq. 4th International Iraqi Conference on Engineering Technology and Their Applications (IICETA), 323–329. https://doi.org/10.1109/IICETA51758.2021.9717570

- Gibreel, O., & Abdalla, A. (2024). Electronic learning and the digital divide in Sudan: A sustainable development approach for e-learning adoption amidst pandemics and civil unrest. In A. Ahmed (Ed.), World Sustainable Development Outlook 2024 (pp. 65–83). https://doi.org/10.47556/B.OUTLOOK2024.22.7

- Hassanzadeh, A., Kanaani, F., & Elahi, S. (2012). A model for measuring e-learning systems success in universities. Expert Systems with Applications, 39(12), 10959–10966. https://doi.org/10.1016/J.ESWA.2012.03.028

- Herath, C. P., Weerakkody, W. J. S. K., & Gunarathne, W. K. T. M. (2015). Infrastructure factors affection for E-learning practice of students in Wayamba University of Sri Lanka: Case study: Makandura premises. 2015 8th International Conference on Ubi-Media Computing (UMEDIA), 196–201. https://doi.org/10.1109/UMEDIA.2015.7297454

- Hernández-Ramos, J. P., Martínez-Abad, F., García Peñalvo, F. J., Esperanza Herrera García, M., & Rodríguez-Conde, M. J. (2014). Teachers’ attitude regarding the use of ICT. A factor reliability and validity study. Computers in Human Behavior, 31(1), 509–516. https://doi.org/10.1016/J.CHB.2013.04.039

- Hodges, C., Moore, S., Lockee, B., Trust, T., & Bond, A. (2020, March 27). The difference between emergency remote teaching and online learning. Educause Review. https://er.educause.edu/articles/2020/3/the-difference-between-emergency-remote-teaching-and-online-learning

- House, J. S. (1981). Work stress and social support. Addison Wesley.

- Hou, M., Lin, Y., Shen, Y., & Zhou, H. (2022). Explaining pre-service teachers’ intentions to use technology-enabled learning: An extended model of the theory of planned behavior. Frontiers in Psychology, 13. https://doi.org/10.3389/FPSYG.2022.900806

- Hwang, S., & Kim, H. K. (2022). Development and validation of the e-learning satisfaction scale (eLSS). Teaching and Learning in Nursing, 17(4), 403–409. https://doi.org/10.1016/J.TELN.2022.02.004

- Jameel, A. S., Karem, M. A., Aldulaimi, S. H., Muttar, A. K., & Ahmad, A. R. (2022). The acceptance of E-learning service in a higher education context. Lecture Notes in Networks and Systems, 299, 255–264. https://doi.org/10.1007/978-3-030-82616-1_23

- Jamil, L. S. (2017). Assessing the behavioural intention of students towards Learning Management System, through technology acceptance model - case of Iraqi universities. Journal of Theoretical and Applied Information Technology, 95(16), 3825–3840.

- Kanwal, F., & Rehman, M. (2014). E-learning adoption model: A case study of Pakistan. Life Science Journal, 11, 78–86.

- Karimian, Z., & Chahartangi, F. (2024). Development and validation of a questionnaire to measure educational agility: a psychometric assessment using exploratory factor analysis. BMC Medical Education, 24(1), 1284. https://doi.org/10.1186/S12909-024-06307-z

- Kaur, S., & Sehajpal, S. (2025). Digital maturity and ecosystem synergy: Unveiling the path to Sustainable Development Goals in SAARC countries. In C. Popescu (Ed.), Impacts of digital maturity and digital ecosystems on Sustainable Development Goals (pp. 189–224). IGI Global. https://doi.org/10.4018/979-8-3373-3700-5.ch006

- Kim, E. J., Kim, J. J., & Han, S. H. (2021). Understanding student acceptance of online learning systems in higher education: Application of social psychology theories with consideration of user innovativeness. Sustainability, 13(2), 896. https://doi.org/10.3390/SU13020896

- Kithsiri, U. G., Peiris, A. P. T. S., Wickramarathna, T., Amarawardhana, K., Abeyweera, R., Senanayake, N. N., Jayasuriya, J., & Fransson, T. H. (2018). A remote mode master degree program in sustainable energy engineering: Student perception and future direction. Advances in Intelligent Systems and Computing, 715, 673–683. https://doi.org/10.1007/978-3-319-73210-7_79

- Koeske, G. F., & Koeske, R. D. (1993). A preliminary test of a stress–strain–outcome model for reconceptualizing the burnout phenomenon. Journal of Social Service Research, 17(3–4), 107–135. https://doi.org/10.1300/J079v17n03_06

- Kong, S. C., Chan, T.-W., Huang, R., & Cheah, H. M. (2014). A review of E-Learning policy in school education in Singapore, Hong Kong, Taiwan, and Beijing: implications to future policy planning. Journal of Computers in Education, 1, 187–212. https://doi.org/10.1007/S40692-014-0011-0

- Lal, V., Kumbhar, V., & Varaprasad, G. (2024). Novel extension of the UTAUT model to assess e-learning adoption in higher education institutes: The role of study life quality. Knowledge Management and E-Learning, 16(1), 42–64. https://doi.org/10.34105/j.kmel.2024.16.002

- Lan, T., Chen, Y., Li, H., Guo, L., & Huang, J. (2021). From driver to enabler: the moderating effect of corporate social responsibility on firm performance. Economic Research-Ekonomska Istrazivanja, 34(1), 2240–2262. https://doi.org/10.1080/1331677X.2020.1862686

- Lyukevich, I., Agranov, A., Lvova, N., & Guzikova, L. (2020). Digital experience: How to find a tool for evaluating business economic risk. International Journal of Technology, 11(6), 1244–1254. https://doi.org/10.14716/ijtech.v11i6.4466

- Mashroofa, M. M., Haleem, A., Nawaz, N., & Saldeen, M. A. (2023). E-learning adoption for sustainable higher education. Heliyon, 9(6), e17505. https://doi.org/10.1016/j.heliyon.2023.e17505

- Mohammadyari, S., & Singh, H. (2015). Understanding the effect of e-learning on individual performance: The role of digital literacy. Computers & Education, 82, 11–25. https://doi.org/10.1016/j.compedu.2014.10.025

- Nagy, V., & Duma, L. (2023). Measuring efficiency and effectiveness of knowledge transfer in e-learning. Heliyon, 9(7), e17502. https://doi.org/10.1016/J.HELIYON.2023.E17502

- Nguyen, P. T., Nguyen, Q. L. H. T. T., Huynh, V. D. B., & Nguyen, L. T. (2024). E-learning quality and the learners’ choice using spherical fuzzy analytic hierarchy process decision-making approach. Vikalpa, 49(2), 143–156. https://doi.org/10.1177/02560909241255003

- Penado Abilleira, M., Rodicio-García, M. L., Ríos-de-Deus, M. P., & Mosquera-González, M. J. (2020). Technostress in Spanish university students: Validation of a measurement scale. Frontiers in Psychology, 11, 582317. https://doi.org/10.3389/fpsyg.2020.582317

- Rahayu, W., Putra, M. D. K., Faturochman, Meiliasari, Sulaeman, E., & Koul, R. B. (2021). Development and validation of Online Classroom Learning Environment Inventory (OCLEI): The case of Indonesia during the COVID-19 pandemic. Learning Environments Research, 25(1), 97–103. https://doi.org/10.1007/S10984-021-09352-3

- Ramhith, R. V., & Lallmahomed, M. Z. I. (2024). Secondary school teachers’ adoption of e-learning platforms in post COVID-19: A Unified Theory of Acceptance and Use of Technology (UTAUT) perspective. In A. Seeam, V. Ramsurrun, S. Juddoo, & A. Phokeer (Eds.), Innovations and interdisciplinary solutions for underserved areas (pp. 264–277). https://doi.org/10.1007/978-3-031-51849-2_18

- Ringle, C. M., Wende, S., & Becker, J. M. (2024). SmartPLS 4. Scientific Research Publishing. https://www.smartpls.com

- Rodríguez-Ardura, I., & Meseguer-Artola, A. (2016). E-learning continuance: The impact of interactivity and the mediating role of imagery, presence and flow. Information and Management, 53(4), 504–516. https://doi.org/10.1016/j.im.2015.11.005

- Rowell, L. (2010). How government policy drives e-learning. Elearn Magazine, 10(3). https://doi.org/10.1145/1872818.1872821

- Sangwan, A., Sangwan, A., & Punia, P. (2021). Development and validation of an attitude scale towards online teaching and learning for higher education teachers. TechTrends, 65(2), 187–195. https://doi.org/10.1007/S11528-020-00561-W

- Sehajpal, S., Lata, K., & Soti, P. (2025). Culturally attuned digital transformation in Education: Integrating femtech for inclusive learning ecosystems. In S. Du, M. Sanmugam, N. Mohd Barkhaya, C. Chen, & J. Wang (Eds.), Cultural considerations for effective digital transformation in education (pp. 253–298). IGI Global. https://doi.org/10.4018/979-8-3373-3673-2.ch010

- Shah, S., Mehta, N., & Sunil, A. (2025). Investigation of e-learning adoption in higher education based on the unified theory of acceptance and use of technology model. E-Learning and Digital Media, 22(2), 171–192. https://doi.org/10.1177/20427530241232493

- Singh, M. (2020). E-learning industry Saudi Arabia, growth analysis. Ken Research. https://www.kenresearch.com/industry-reports/saudi-arabia-e-learning-market

- Singh, M., Adebayo, S. O., Saini, M., & Singh, J. (2021). Indian government E-learning initiatives in response to COVID-19 crisis: A case study on online learning in Indian higher education system. Education and Information Technologies, 26(6), 7569–7607. https://doi.org/10.1007/S10639-021-10585-1

- Singh, N., & Sehajpal, S. (2025). Economic impact of demographic shifts amid labor automation and policy inertia: Aging vs. youth in an automated global economy. In B. Christiansen, & J. Branch (Eds.), Critical Economic Implications of Global Demographic Changes (pp. 149–180). IGI Global. https://doi.org/10.4018/979-8-3373-2550-7.ch006

- Singh, V., & Thurman, A. (2019). How many ways can we define online learning? A Systematic literature review of definitions of online learning (1988-2018). American Journal of Distance Education, 33(4), 289–306. https://doi.org/10.1080/08923647.2019.1663082

- Ssemugenyi, F., & Nuru Seje, T. (2021). A decade of unprecedented e-learning adoption and adaptation: Covid-19 revolutionizes teaching and learning at Papua New Guinea University of Technology (PNGUoT): “Is it a wave of change or a mere change in the wave?”. Cogent Education, 8(1). https://doi.org/10.1080/2331186X.2021.1989997

- Taber, K. S. (2018). The use of Cronbach’s Alpha when developing and reporting research instruments in science education. Research in Science Education, 48(6), 1273–1296. https://doi.org/10.1007/S11165-016-9602-2

- Tarafdar, M., Tu, Q., Ragu-Nathan, T. S., & Ragu-Nathan, B. S. (2011). Crossing to the dark side: Examining creators, outcomes, and inhibitors of technostress. Communications of the ACM, 54(9), 113–120. https://doi.org/10.1145/1995376.1995403

- Teo, T., Lee, C. B., & Chai, C. S. (2008). Understanding pre-service teachers’ computer attitudes: Applying and extending the technology acceptance model. Journal of Computer Assisted Learning, 24(2), 128–143. https://doi.org/10.1111/J.1365-2729.2007.00247.X

- Teo, T. S. H., Kim, S. L., & Jiang, L. (2020). E-Learning implementation in South Korea: integrating effectiveness and legitimacy perspectives. Information Systems Frontiers, 22(2), 511–528. https://doi.org/10.1007/S10796-018-9874-3

- Tom, A. M., Virgiyanti, W., & Rozaini, W. (2019). Understanding the determinants of infrastructure-as-a service-based E-learning adoption using an integrated TOE-DOI model: A Nigerian perspective. International Conference on Research and Innovation in Information Systems (ICRIIS). https://doi.org/10.1109/ICRIIS48246.2019.9073418

- Venkatesh, V., Morris, M. G., Davis, G. B., & Davis, F. D. (2003). User acceptance of information technology: Toward a unified view. MIS Quarterly, 27(3), 425–478. https://doi.org/10.2307/30036540

- Wen, W., Chen, Y. H., & Pao, H. H. (2008). A mobile knowledge management decision support system for automatically conducting an electronic business. Knowledge-Based Systems, 21(7), 540–550. https://doi.org/10.1016/j.knosys.2008.03.029

- Xian, X. (2019). Empirical investigation of E-learning adoption of university teachers: A PLS-SEM approach. In S. Cheung, J. Jiao, L.-K. Lee, X. Zhang, K. Li, & Z. Zhan (Eds.), Technology in Education: Pedagogical Innovations, 1048, 169–178. Springer. https://doi.org/10.1007/978-981-13-9895-7_15

- Yang, P., & Qian, S. (2025). The factors affecting students’ behavioral intentions to use e-learning for educational purposes: A study of physical education students in China. SAGE Open, 15(1). https://doi.org/10.1177/21582440251313654

- Yi, Y., Li, G., Chen, T., Wang, P., & Luo, H. (2024). Investigating the factors that sustain college teachers’ attitude and behavioral intention toward online teaching. Sustainability, 16(6), 2286. https://doi.org/10.3390/SU16062286

- Zhao, X., & Xue, W. (2023). From online to offline education in the post-pandemic era: Challenges encountered by international students at British universities. Frontiers in Psychology, 13. https://doi.org/10.3389/FPSYG.2022.1093475

https://orcid.org/0009-0008-1771-6095
